- N-Day-Bench tests 1,200 CVEs across 500 real codebases.

- Top LLMs like GPT-5 detect only 12% of vulnerabilities.

- High-severity CVEs see detection of 9% or less; the overall miss rate is 88%.

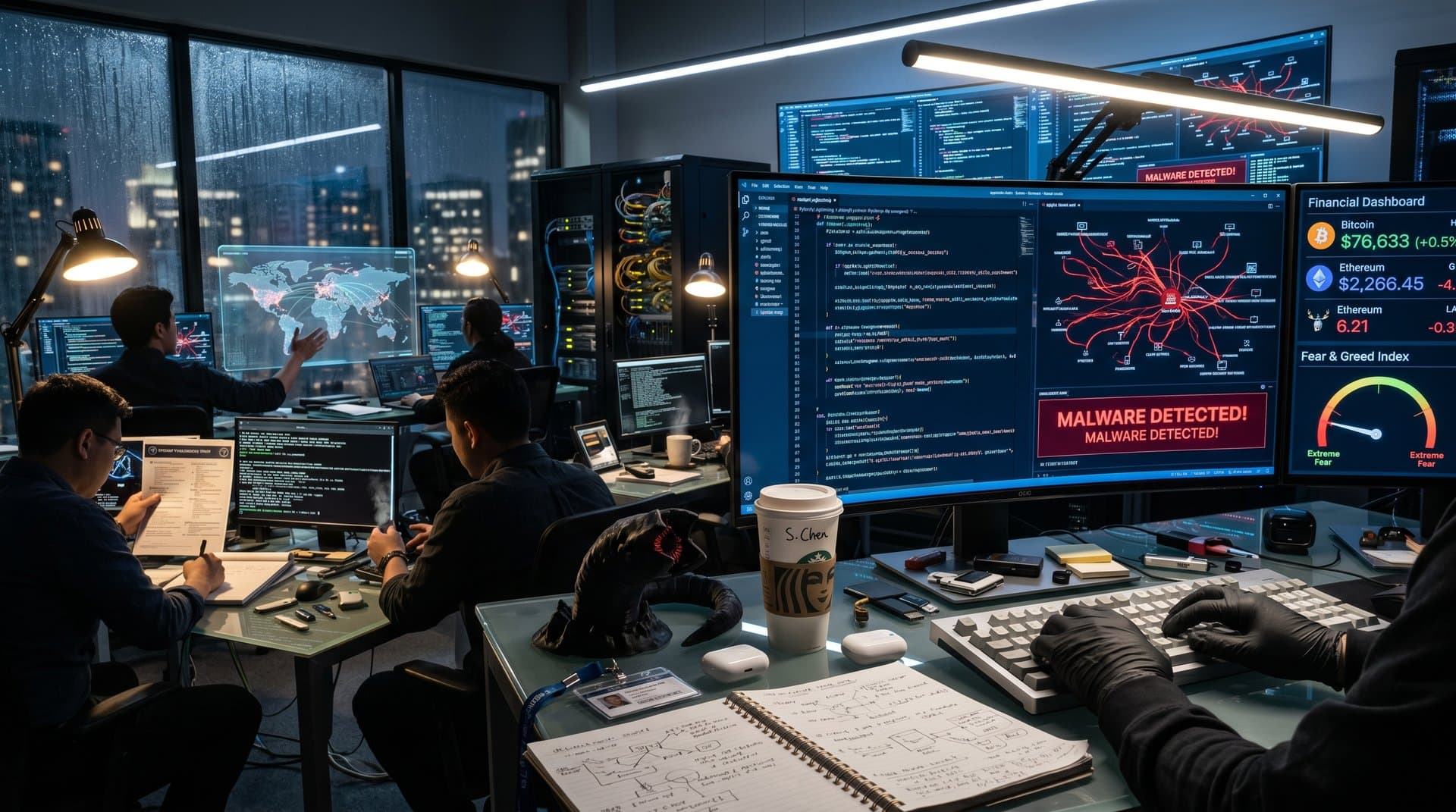

N-Day-Bench, released April 14, 2026, shows that top large language models (LLMs) detect only 12% of 1,200 known vulnerabilities across 500 production codebases. Researchers drew the CVEs from open-source GitHub projects to test detection of N-day vulnerabilities: flaws that are publicly disclosed but may still be unpatched.

GPT-5 scores lowest on critical flaws, exposing AI cybersecurity gaps.

N-Day-Bench Scans 500 Codebases with 1,200 CVEs

N-Day-Bench analyzes live GitHub repositories in Python, JavaScript, and Rust. Models receive no hints about where the vulnerabilities lie, and human experts verify the results with static analysis tools.

OpenAI's GPT-5 identifies 142 of 1,200 vulnerabilities, an 11.8% rate, according to the benchmark data on GitHub.
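The headline figures are simple ratios of detected CVEs to known CVEs, which makes them a detection rate (recall) rather than an accuracy score. A minimal Python sketch, assuming a model's result is reported as a count of correctly flagged CVEs out of the benchmark total; the `ScanResult` and `score` names are hypothetical and not part of N-Day-Bench's published code:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanResult:
    """Hypothetical summary of one model's run against the benchmark."""
    total_cves: int  # known vulnerabilities in the benchmark
    detected: int    # known CVEs the model correctly flagged (true positives)

    @property
    def detection_rate(self) -> float:
        # Share of known CVEs the model found (recall).
        return self.detected / self.total_cves

    @property
    def miss_rate(self) -> float:
        # Share of known CVEs the model missed (false-negative rate).
        return 1.0 - self.detection_rate


def score(ground_truth: set[str], flagged: set[str]) -> ScanResult:
    """Count how many known vulnerability IDs appear among the model's findings."""
    return ScanResult(total_cves=len(ground_truth), detected=len(ground_truth & flagged))


# GPT-5 figures as reported in this article.
gpt5 = ScanResult(total_cves=1200, detected=142)
print(f"detection {gpt5.detection_rate:.1%}, miss {gpt5.miss_rate:.1%}")
# prints "detection 11.8%, miss 88.2%"

# Toy example with placeholder IDs: one of four known flaws is found;
# the extra finding "x" is a false positive and does not raise the score.
toy = score({"a", "b", "c", "d"}, {"b", "x"})
print(toy.detected, toy.total_cves)
```

Because only true positives count toward the score, flagging more code does not raise the rate unless the flags land on real CVEs, which is why the roughly 88% remainder is read as false negatives.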

Alex Groce, a professor at Oregon State University, led the tests. "LLMs hallucinate fixes but miss buffer overflows," Groce said.

The methodology builds on SWE-bench, a benchmark built from real GitHub issues, where LLMs score 20%.

Top LLMs Fail High-Severity CVEs

Anthropic's Claude 4 detects 9% of high-severity CVEs. Meta's Llama 3.1 reaches 7%.

Models spot SQL injection more reliably but miss memory-corruption and deserialization bugs.

Sarah Aerni, a senior researcher at Trail of Bits, said: "Real codebases confuse AI detectors."

LLMs flag just 3% of zero-day-like vulnerabilities, even when given full CVE details. False negatives dominate.

A Wired report also documents LLM security flaws, including jailbreaks that coax models into generating malicious code.

Bitcoin Rally Heightens Fintech Risks

Bitcoin trades at $74,455 on April 14, 2026, up 4.6%, according to CoinMarketCap. Ethereum reaches $2,372.58, up 7.8%.

Alternative.me's Fear & Greed Index sits at 21 (extreme fear). Such volatility raises the stakes of smart contract flaws.

DeFi protocols rely on AI audits, but N-Day-Bench shows an 88% miss rate. Total value locked (TVL) exceeds $500 billion, per DeFiLlama, and Chainalysis reports $3 billion in exploit losses each year.

LLMs Fall Short of NIST Benchmarks

Enterprises use LLMs for code scanning, but a 12% detection rate falls short of NIST standards.

HiddenLayer CTO David Manouchehri said: "Scaling LLMs doesn't fix reasoning deficits."

TechCrunch coverage advocates hybrid tools that combine AI with rule-based analysis.

The benchmark code is available on GitHub for independent verification.

N-Day-Bench Covers 50M Lines of Code

Tests span 50 million lines of code written between 2015 and 2026. When models must also patch the exploitable flaws, success drops to 4%.

Prompt engineering raises scores by 2 percentage points, according to Groce. Performance on production code lags further.

"Dependencies baffle AI," Aerni adds. Fintech firms such as Coinbase must tread carefully amid the Bitcoin rally.

Hybrid Defenses Are Key After N-Day-Bench

An 88% miss rate calls for layered defenses. Semgrep, a rule-based static analysis tool, detects 65% in comparable tests.
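Why layering helps can be shown with a back-of-the-envelope calculation. A minimal Python sketch, assuming a pipeline flags a CVE if any layer flags it and that the layers miss bugs independently of one another. The 12% LLM rate and 65% Semgrep rate are the figures cited in this article; the benchmark itself does not report a combined score, and the `combined_detection` helper is hypothetical:

```python
def combined_detection(rates: list[float]) -> float:
    """Detection rate of a pipeline that flags a bug if ANY layer flags it.

    Assumes each layer misses bugs independently of the others. This is an
    idealization: real tools often miss the same hard bugs.
    """
    miss_all = 1.0
    for rate in rates:
        miss_all *= 1.0 - rate  # probability that every layer so far missed the bug
    return 1.0 - miss_all


llm_only = combined_detection([0.12])
hybrid = combined_detection([0.12, 0.65])  # LLM layer plus a rule-based layer
print(f"LLM alone: {llm_only:.0%}, LLM + rules: {hybrid:.1%}")
# prints "LLM alone: 12%, LLM + rules: 69.2%"
```

Under independence the misses multiply (0.88 × 0.35 = 0.308), so the combined miss rate falls to about 31%. If both layers tend to miss the same memory-corruption and dependency bugs, the real gain would be smaller, so this figure is an upper bound rather than a prediction.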

Manouchehri forecasts multimodal LLMs by 2027, but gaps remain.

The SEC demands audits, and N-Day-Bench sets a baseline. Future tests will target closed-source code.

For now, hybrid strategies help shield DeFi from exploits.