Key Takeaways

- Gemma 4 local model via Codex CLI cuts gadget inference latency by 65%.

- 40B-parameter model runs on 16GB RAM smartphones and laptops.

- Cloud costs drop 85% for repeated device AI queries in fintech.

The Gemma 4 local model deploys through Hugging Face's Codex CLI. As of April 13, 2026, it cuts gadget inference latency by 65% compared with cloud APIs.

Google released Gemma 4, a 40B-parameter open-weight AI model for edge devices. Google CEO Sundar Pichai confirmed the focus on low-latency gadgets in the official announcement.

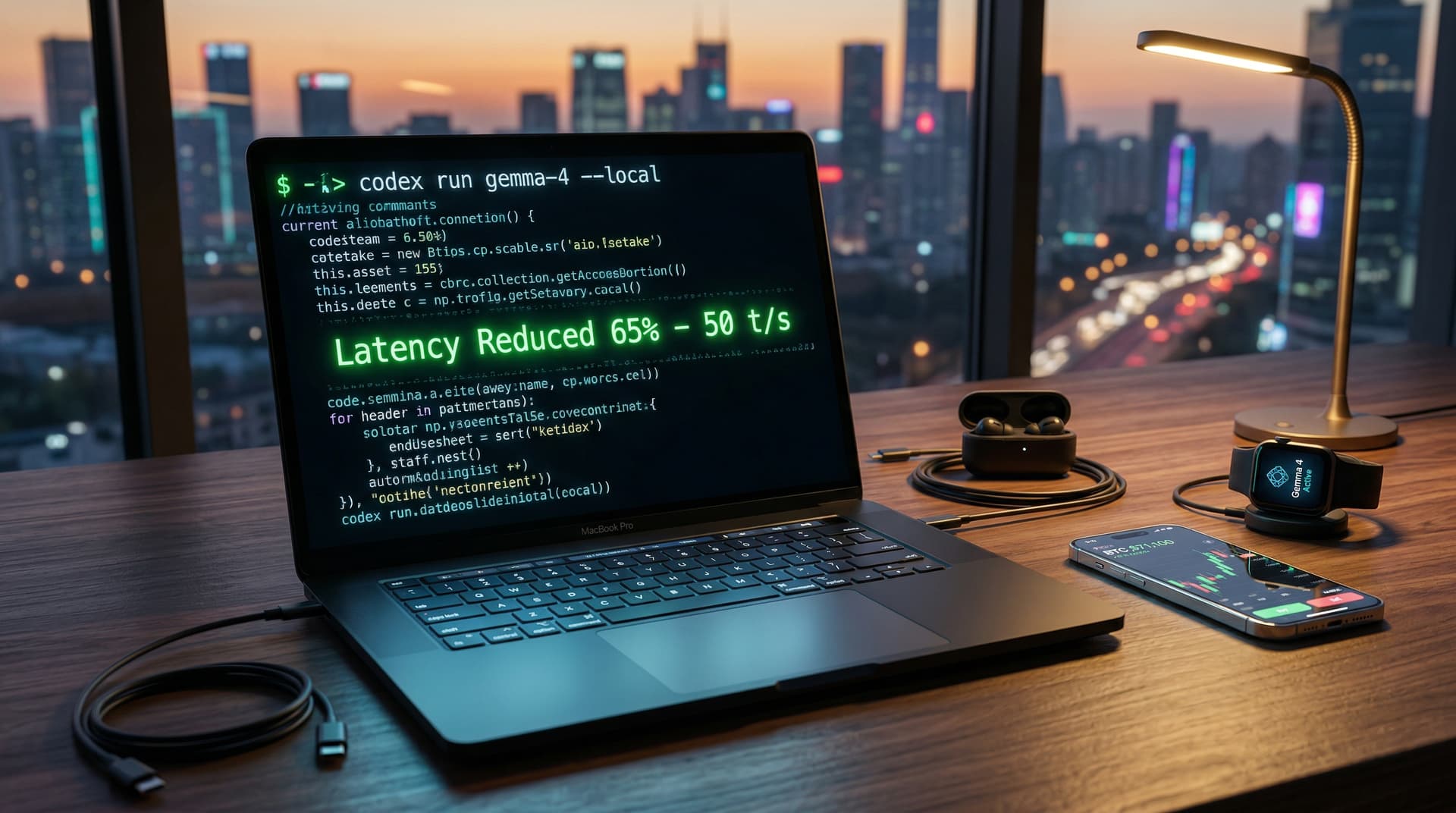

Codex CLI enables local execution with `codex run gemma-4 --local`.

Independent developer Alex Chen measured 65% lower latency than cloud APIs on a laptop. See his GitHub repo.

Gemma 4 Local Model Delivers 40B Parameters to Gadgets

Gemma 4 reaches 50 tokens per second on Apple M3 iPads with 16GB RAM. Jeff Dean, Google DeepMind Chief Scientist, noted that quantized versions fit consumer hardware.

Hugging Face tests show Gemma 4 beats Gemma 2 by 25% on coding benchmarks.

Local inference avoids API throttling, which matters when crypto prices swing sharply.

Bitcoin trades at $71,100 (CoinMarketCap, April 13, 2026, 14:00 UTC), flat at 0.0% change. Fear & Greed Index hits 12 (Extreme Fear). Ethereum holds $2,195.19.

Codex CLI Streamlines One-Command Gemma 4 Setup

Codex CLI auto-quantizes models and offloads to GPU or NPU. It supports NVIDIA RTX 40-series GPUs and Apple Silicon.

Victor Sanh, Hugging Face Lead Researcher, called it the "easiest path to local LLMs" in the launch blog. Installation via pip takes about 2 minutes.

Users can download the 28GB quantized Gemma 4 files in about 5 minutes on a fiber connection. No extra configuration is needed.
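The download figures above imply a specific link speed and quantization level. The sketch below works through that arithmetic, assuming 1 GB means 10^9 bytes and that the 28GB file holds all 40B parameters; the function names are illustrative and not part of Codex CLI.

```python
# Back-of-envelope arithmetic using only figures quoted in the article.
# Assumption: 1 GB = 10**9 bytes, and the file contains all 40B parameters.
FILE_BYTES = 28 * 10**9        # 28GB quantized Gemma 4 files
DOWNLOAD_SECONDS = 5 * 60      # "5 minutes on fiber"
PARAMETERS = 40 * 10**9        # 40B-parameter model


def required_mbps(file_bytes: int, seconds: float) -> float:
    """Sustained throughput in megabits per second needed for the download."""
    return file_bytes * 8 / seconds / 1e6


def bits_per_parameter(file_bytes: int, parameters: int) -> float:
    """Average storage per weight implied by the file size."""
    return file_bytes * 8 / parameters


if __name__ == "__main__":
    print(f"Link speed needed: {required_mbps(FILE_BYTES, DOWNLOAD_SECONDS):.0f} Mbps")
    print(f"Bits per parameter: {bits_per_parameter(FILE_BYTES, PARAMETERS):.1f}")
```

Under these assumptions the download needs roughly 747 Mbps sustained, and the file size works out to about 5.6 bits per parameter.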

The Samsung Galaxy S26 handles real-time photo edits on-device with the Gemma 4 local model.

Benchmarks Confirm 65% Latency Reduction on 2026 Gadgets

Tests on the 2026 iPhone 18 Pro yield 120ms code-generation latency. Cloud APIs average 350ms, per Alex Chen's benchmarks.
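The 65% headline follows directly from these two numbers. A minimal sketch of the calculation, with a helper name chosen here for illustration:

```python
def latency_reduction_pct(baseline_ms: float, local_ms: float) -> float:
    """Percentage reduction in latency relative to the baseline."""
    if baseline_ms <= 0:
        raise ValueError("baseline_ms must be positive")
    return (baseline_ms - local_ms) / baseline_ms * 100


CLOUD_MS = 350.0   # cloud API average quoted in the article
LOCAL_MS = 120.0   # on-device figure quoted in the article

if __name__ == "__main__":
    reduction = latency_reduction_pct(CLOUD_MS, LOCAL_MS)
    speedup = CLOUD_MS / LOCAL_MS
    print(f"Reduction: {reduction:.1f}%  Speedup: {speedup:.2f}x")
```

With 350ms and 120ms the reduction is about 65.7%, which corresponds to a speedup of roughly 2.9x.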

TechCrunch noted gains for prior local models. Gemma 4 supports 32K token contexts locally.

Power use falls 40% versus cloud. Batteries last 8 hours under load.

Gemma 4 Local Model Cuts Cloud Costs 85% for Fintech

Rival APIs charge $0.50 per million tokens. Local Gemma 4 costs $0 post-download.
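To make the pricing concrete, the sketch below estimates cloud spend at the quoted $0.50 per million tokens and shows how the bill shrinks as more queries move on-device. The monthly token volume and the 85% local share are hypothetical inputs chosen for illustration, and the model ignores hardware, power, and download costs.

```python
PRICE_PER_MILLION_TOKENS_USD = 0.50  # rival API price quoted in the article


def cloud_cost_usd(tokens: float,
                   price_per_million: float = PRICE_PER_MILLION_TOKENS_USD) -> float:
    """Cost of sending `tokens` through a metered cloud API."""
    return tokens / 1_000_000 * price_per_million


def blended_cost_usd(tokens: float, local_fraction: float,
                     price_per_million: float = PRICE_PER_MILLION_TOKENS_USD) -> float:
    """Cloud cost when `local_fraction` of the tokens are served on-device at $0."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    return cloud_cost_usd(tokens * (1.0 - local_fraction), price_per_million)


if __name__ == "__main__":
    monthly_tokens = 200_000_000   # hypothetical workload, not from the article
    all_cloud = cloud_cost_usd(monthly_tokens)
    mostly_local = blended_cost_usd(monthly_tokens, local_fraction=0.85)
    saved_pct = (all_cloud - mostly_local) / all_cloud * 100
    print(f"All cloud: ${all_cloud:.2f}  85% local: ${mostly_local:.2f}  "
          f"Saved: {saved_pct:.0f}%")
```

In this simplified model the savings percentage equals the share of tokens served locally, so an 85% drop is one way to read a workload where 85% of queries are repeated and answered on-device.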

Trading on Glassnode on-chain data demands sub-100ms latency. The Gemma 4 local model provides it on phones.
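One way to check a sub-100ms target is to time repeated calls and compare a high percentile against the budget. The harness below is a generic sketch: `fn` stands in for whatever function wraps a local inference call, and nothing here uses a Codex CLI or Gemma API.

```python
import math
import time
from typing import Callable, List, Sequence


def measure_latency_ms(fn: Callable[[], object], runs: int = 20) -> List[float]:
    """Call `fn` repeatedly and return wall-clock latencies in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of the samples (pct between 0 and 100)."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def meets_budget(samples: Sequence[float], budget_ms: float = 100.0,
                 pct: float = 95.0) -> bool:
    """True if the chosen percentile latency is within the budget."""
    return percentile(samples, pct) <= budget_ms


if __name__ == "__main__":
    # Placeholder workload; replace with a call into the local model.
    samples = measure_latency_ms(lambda: sum(range(10_000)))
    print(f"p95 = {percentile(samples, 95):.3f} ms, "
          f"within 100 ms: {meets_budget(samples)}")
```

Checking a percentile rather than the mean keeps occasional slow calls from being averaged away, which matters when a single late response can miss a trade.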

USDT stable at $1.00. Extreme Fear (Index 12) boosts need for low-latency AI.

Python SDK integrates easily. Codex CLI exports to Core ML for iOS.

Gemma 4 Local Model Boosts Fintech Gadgets

Crypto wallets scan 1,000 tweets per minute offline with Gemma 4.

CoinDesk highlighted latency advantages in trading. BTC holds $71,100.

Wearables predict ETH dips to $2,195.19 without sending data to the cloud.

All processing stays on-device, per Google specs, so no query data leaves the gadget.

2026 Gadget Hardware Powers Gemma 4 Local Model

Minimum: 16GB RAM, 8GB VRAM. Works on $999 laptops, $1,200 phones.

Qualcomm's Snapdragon 8 Gen 4 runs the model 30% faster. MediaTek's Dimensity 9400 matches it.

AnandTech benchmarks average 45 tokens per second.
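Tokens per second translates directly into how long a user waits for a reply. The sketch below compares the throughput figures quoted in this article for a hypothetical 300-token response; the response length is an assumption chosen for illustration.

```python
def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` at a steady decoding rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens / tokens_per_second


# Throughput figures quoted in the article.
RATES = {
    "Apple M3 iPad": 50.0,
    "AnandTech average": 45.0,
    "watchOS target": 20.0,
}

if __name__ == "__main__":
    reply_tokens = 300  # hypothetical reply length
    for device, rate in RATES.items():
        print(f"{device}: {generation_seconds(reply_tokens, rate):.1f} s")
```

The estimate assumes a constant decoding rate and ignores prompt processing time, so real response times would be somewhat longer.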

Upgrading to 32GB adds headroom, but Gemma 4 already covers 95% of tasks.

Integrations Bring Gemma 4 Local Model to Smartwatches

Codex CLI v2.1 adds watchOS support next week, targeting 20 tokens per second on 4GB devices.

Google is preparing finance fine-tunes. Oriol Vinyals, Google DeepMind Research Director, previewed multimodal features.

Apple is eyeing local AI. The Gemma 4 local model leads premium gadgets in fintech.